How Entelligence Helped Clodo Ship Faster With Confidence

Apr 28, 2026

BACKGROUND

Clodo builds AI-powered people search.

Clodo is a B2B SaaS company whose platform uses AI to help sales and growth teams find, qualify, and reach the right people faster. With a small, fast-moving engineering team, the pace of shipping is a competitive advantage. Their developers already relied on Claude and Cursor as daily coding tools.

THE PROBLEM

Shipping fast was creating blind spots.

AI coding assistants are built to generate code, not review it. As Clodo's codebase grew across external API integrations, OAuth flows, and data pipelines, so did the surface area for subtle issues that slipped past both automated checks and manual review. Incidents were being missed. Code reviews lacked context. Problems were reaching production.

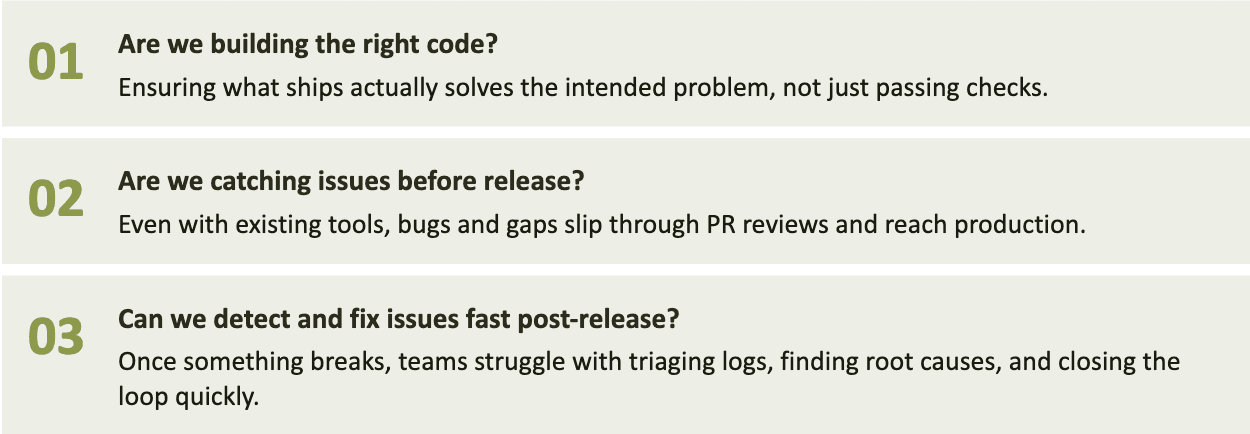

Rithvik Chuppala, Co-founder and CTO, identified three problems every engineering leader faces. Entelligence addresses all three.

HOW ENTELLIGENCE FITS IN

A second set of eyes on every PR.

What separates Entelligence from other review tools is its self-learning loop: every incident gets indexed as precedent, so the next PR gets reviewed against Clodo's own history of what has actually broken.

CLI-based code review keeps feedback tight without breaking flow.

An incidents dashboard connects production failures to the code that caused them, with no manual log triage.

The loop compounds. Observe, review, remediate, learn. Every incident makes the next one less likely.

RESULTS

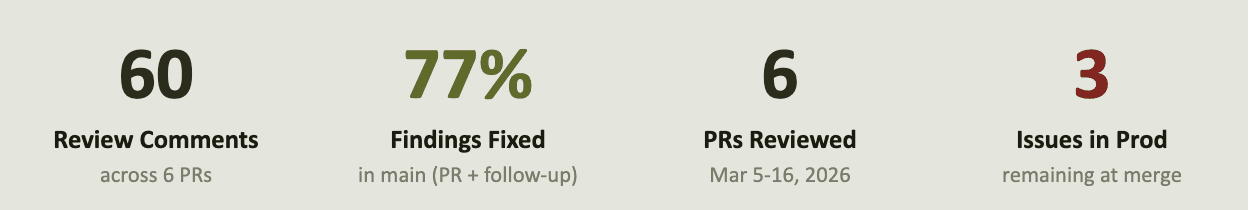

77% of findings drove code changes.

GitHub's native acceptance signal marked only 3.3% of findings as resolved. Measured by the code that actually changed, the figure is 77%. The gap exists because Clodo's engineers fix issues in follow-up commits after merge rather than resolving review threads inline. Entelligence's analysis instead tracks changes at HEAD across the full codebase, so those follow-up fixes are counted.

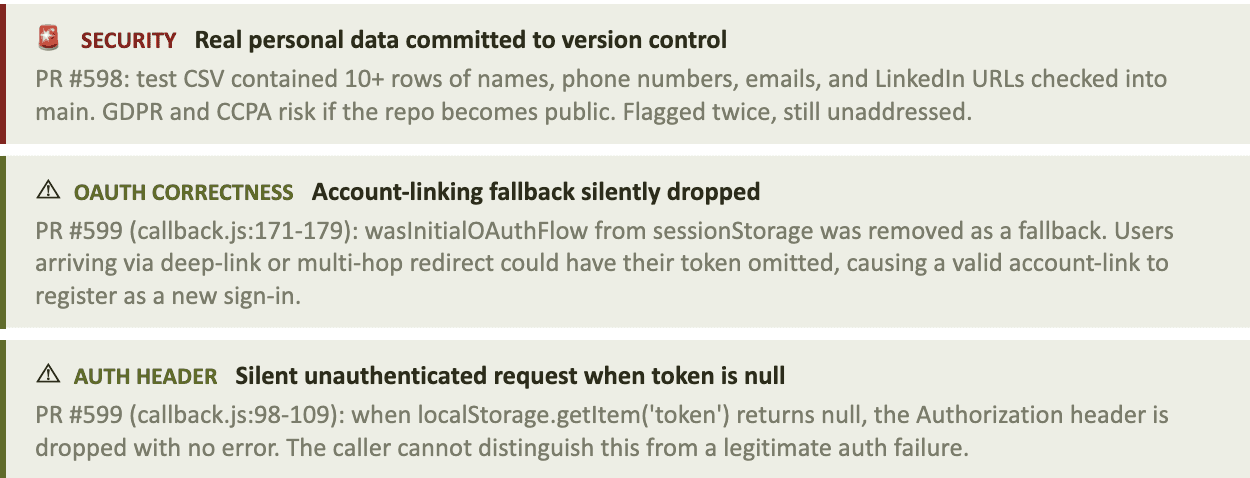

WHAT WAS CAUGHT

Security, OAuth, and real PII in production.

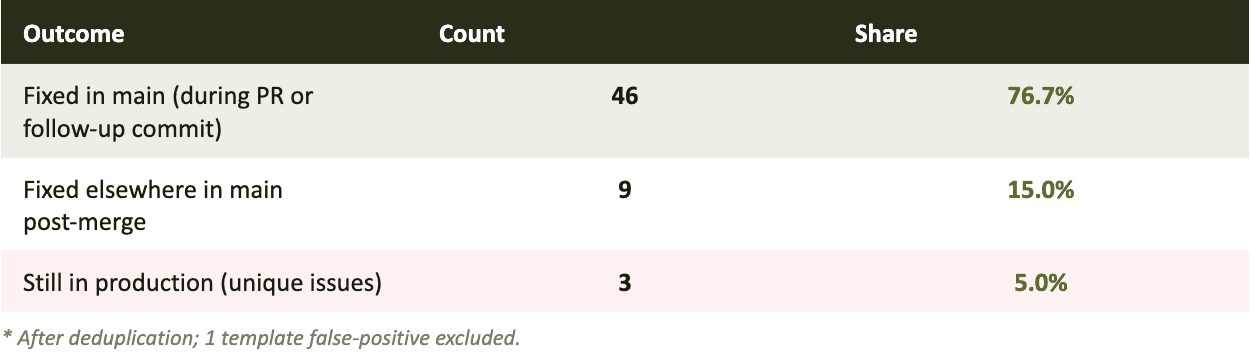

Across 6 PRs, 46 findings drove fixes. Three remain open and illustrate where generative AI tools fall short.

Beyond the open issues, here is a sample of the changes the other 46 findings drove in the codebase:

| Area | What was fixed |
|---|---|
| Security | The escapeCSV utility escaped values inconsistently across 4 call sites; one path let a value containing quotes corrupt export output. Consolidated in PR #591. |
| API Hardening | Error propagation and rate-limiter behavior in the Bettercontact, Apollo, RapidAPI, and FindyMail clients were hardened post-merge in PR #598. |
| Concurrency | Connection-pool and concurrency handling issues in scrape_linkedin_profiles_parallel were flagged and fixed in subsequent commits. |
| AI Runtime | Error-handling consolidations for _run_people_finder_openai and _run_planning_turn_openai landed in main after merge (PR #598). |
| Frontend | leads.js:148-304 received multiple data-handling fixes, and the sent-dispatch loading handlers and formatTime / truncate utilities were extracted post-merge (PR #594). |
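The Security row describes a common CSV failure: if a quote inside a field is not escaped, it ends the quoted field early and shifts or breaks the columns that follow. As a minimal sketch, assuming RFC 4180 conventions (comma delimiter, double-quote escaping), a single helper shared by every export call site might look like this. The names escapeCsvField and toCsvRow are hypothetical; this is not Clodo's escapeCSV implementation.

```typescript
// Hypothetical shared escaper (illustration only, not Clodo's escapeCSV).
// Assumes RFC 4180 rules: comma delimiter, fields quoted with double quotes.
function escapeCsvField(value: unknown): string {
  // Treat null/undefined as empty cells.
  if (value === null || value === undefined) return "";
  const text = String(value);
  // Fields without a delimiter, quote, or line break can be emitted as-is.
  if (!/[",\r\n]/.test(text)) return text;
  // Otherwise double every embedded quote and wrap the whole field in quotes.
  return '"' + text.replace(/"/g, '""') + '"';
}

// Hypothetical row builder: routing every cell through one escaper keeps
// all call sites consistent.
function toCsvRow(values: unknown[]): string {
  return values.map(escapeCsvField).join(",");
}

console.log(toCsvRow(["Ada", 'He said "hi"', "a,b", null]));
```

Keeping the quoting rule in one function means a fix like the one in PR #591 only has to be made once, instead of at each call site.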

The value shows up in the code.

AI generation tools help engineers move fast. Entelligence operates in the gap they leave: reviewing what has already been written, surfacing the issues that are hardest to see when you are close to the work.

Entelligence produced 46 code improvements and caught 3 real issues before they compounded. The value landed in follow-up commits, not in GitHub acceptance rates. That is the workflow working.

Faster shipping. Fewer incidents. A team that trusts every PR.