From Noise to Signal: How Hobbes Built a Code Review Process Engineers Actually Trust

THE PROBLEM

Surface-level review was just noise

Hobbes builds AI-native collaboration tooling. Their engineering team moves fast, ships often, and generates hundreds of pull requests per sprint. Like many modern engineering teams, they were looking to bring AI into their code review workflow.

They started with Claude. For a while, it helped. But over the following months, a pattern emerged. The feedback was too general. Comments flagged style preferences or minor formatting issues. They rarely surfaced anything structural, nothing that would actually stop a bug from reaching production. Engineers started skimming the review output. Then they stopped reading it altogether.

When review feedback does not change engineer behavior, it is not functioning as a safety net. It is noise. The team needed something different: not a tool that checks boxes, but a reviewer that actually understands the codebase, learns from what goes wrong, and flags what matters.

THE SWITCH

Switching to Entelligence

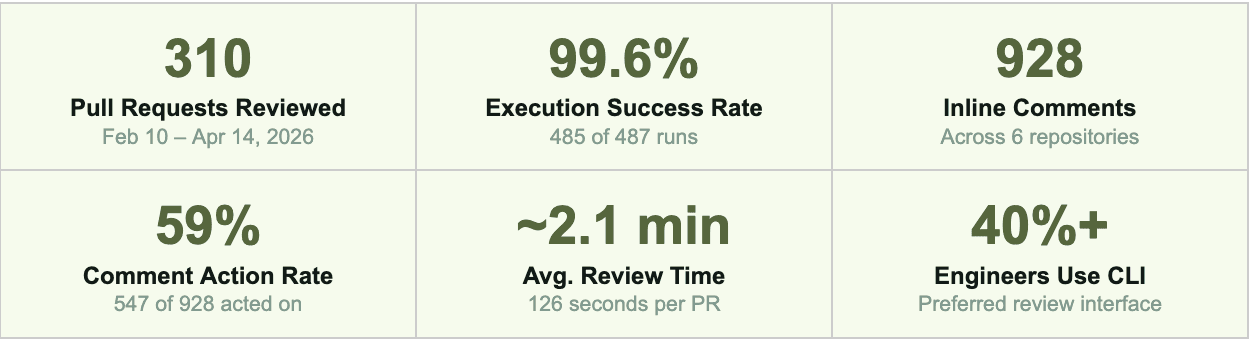

About a month ago, Hobbes switched to Entelligence AI for code review. The decision was not primarily about features. It was about whether engineers would actually engage with the output.

The difference was immediate. Entelligence reviewed pull requests with the kind of precision that comes from deep codebase context: flagging security vulnerabilities, catching race conditions, identifying broken state transitions. The feedback was specific, reproducible, and tied directly to the code at hand. The team noticed quickly. Engineers were not just reading the comments; they were acting on them.

THE LEARNING LOOP

The self-learning loop

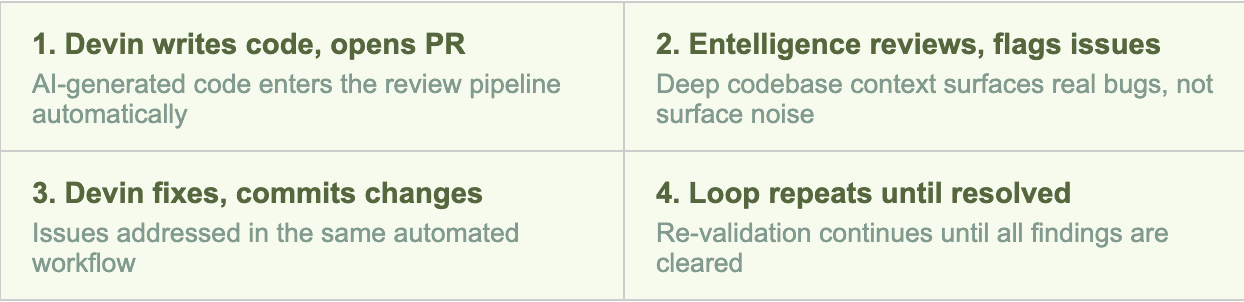

Every PR review builds on what came before. Entelligence tracks patterns across the codebase over time: what types of bugs appear in which services, how they were fixed, what edge cases were missed in prior reviews. When it reviews a new PR, it draws on that accumulated history to produce comments informed by actual incidents, not generic heuristics.

This matters especially for a team using Devin, an AI coding agent, as part of their development workflow. Every major security vulnerability and logic error identified in Hobbes’s review period was introduced by AI-generated code.

TRUST & ADOPTION

Engineers trust it enough to use it

Adoption is the most honest signal that a tool is working. At Hobbes, more than 40% of engineers use the Entelligence CLI for their code reviews. That is not a metric that comes from a mandate; it comes from engineers deciding that the tool saves them time and catches things they care about.

Across 310 pull requests reviewed between February and April 2026, the team acted on 547 of 928 total inline comments, a 59% action rate. Of those, 313 were accompanied by explicit developer acknowledgments. In 38% of all reviewed PRs, at least one comment prompted a concrete change.
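The headline figures can be checked with a few lines of arithmetic. The sketch below uses only the counts quoted above. It assumes the 313 acknowledgments are a subset of the 547 acted-on comments, which the text implies but does not state outright; the variable names are illustrative.

```python
# Counts quoted in the text for the February-April 2026 review period.
total_prs = 310
total_comments = 928
acted_on = 547
acknowledged = 313  # assumed to be a subset of the acted-on comments


def pct(part: float, whole: float) -> float:
    """Return part/whole expressed as a percentage."""
    return 100.0 * part / whole


action_rate = pct(acted_on, total_comments)   # share of comments acted on
ack_share = pct(acknowledged, acted_on)       # share of acted-on comments acknowledged
comments_per_pr = total_comments / total_prs  # average inline comments per PR
prs_with_change = 0.38 * total_prs            # approximate PR count behind the 38% figure

print(f"action rate: {action_rate:.1f}%")
print(f"acknowledged share of acted-on comments: {ack_share:.1f}%")
print(f"average comments per PR: {comments_per_pr:.2f}")
print(f"PRs with at least one concrete change: about {prs_with_change:.0f}")
```

The action rate comes out just under 59%, matching the rounded figure in the text, and the 38% figure corresponds to roughly 118 of the 310 PRs.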

WHAT GOT CAUGHT

10 high/critical issues caught before production

Across the review period, Entelligence identified 10 issues of High or Critical severity before any of them reached production. Every one was introduced by AI-generated code.

| Issue | Severity | Status |
| --- | --- | --- |
| Path Traversal in File Upload | Critical | Fixed |
| Pending File Chooser State Corruption | Critical | Fixed |
| Prompt Injection via Unbounded Input | High | Fixed |
| Race Conditions in Session Recovery | High | Fixed |
| Storage Deletion Before DB Delete | High | Addressed |
| get_prompt_review None Crash | High | Fixed |
| REMOVE-Type Change Handling Bug | High | Fixed |
| Missing Organization Access Check | High | Addressed |
| Pre-signed URL Logged at INFO Level | Medium | Addressed |
| Event Flush Race Condition | High | Addressed |
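To make the first entry concrete, here is a generic sketch of the kind of guard that closes a path traversal hole in a file upload handler. It is not Hobbes's code and not the fix Entelligence suggested; the source gives no implementation details. The function name `resolve_upload_path` and the upload directory are hypothetical.

```python
from pathlib import Path

# Hypothetical upload directory, chosen only for illustration.
UPLOAD_ROOT = Path("/srv/uploads")


def resolve_upload_path(filename: str, root: Path = UPLOAD_ROOT) -> Path:
    """Return the resolved path for `filename` under `root`.

    Raises ValueError if the resolved path would land outside `root`,
    for example via "../" segments or an absolute filename.
    """
    root = root.resolve()
    # resolve() collapses ".." segments and follows symlinks before the check.
    candidate = (root / filename).resolve()
    if not candidate.is_relative_to(root):  # requires Python 3.9+
        raise ValueError(f"unsafe upload path: {filename!r}")
    return candidate
```

A caller would pass the user-supplied filename through this function before opening any file handle, and treat the `ValueError` as a rejected upload.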

TOP ENGAGEMENTS

| Repository | Comments | Acted On | Rate |
| --- | --- | --- | --- |
| hobbesBackend | 34 | 33 | 97% |
| hobbesBackend | 63 | 45 | 71% |
| hobbesBackend | 46 | 35 | 76% |
| hobbesBackend | 47 | 34 | 72% |
| hobbesBackend | 70 | 48 | 69% |