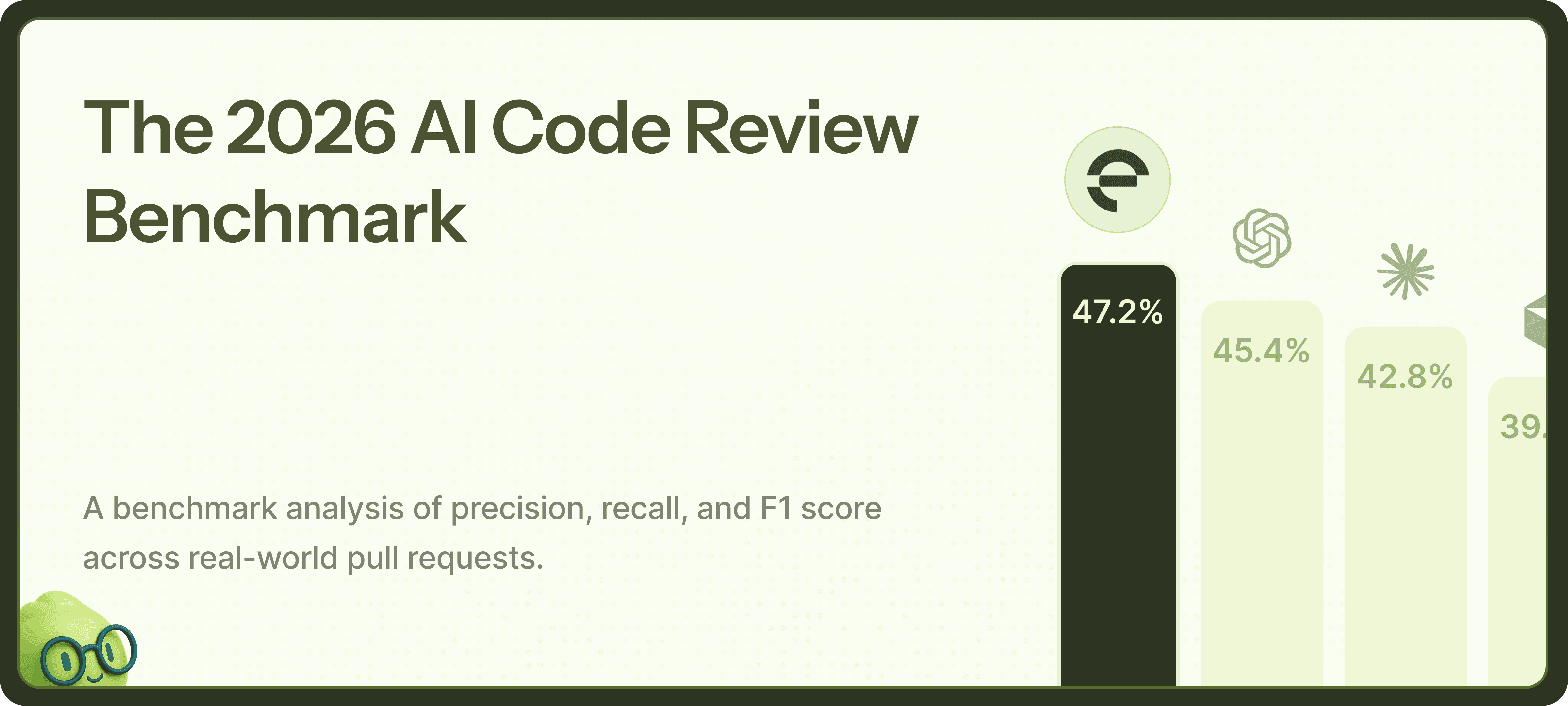

Entelligence Code Review Benchmark 2026

Entelligence was benchmarked against 7 leading AI code review tools (CodeRabbit, Greptile, Copilot, Graphite, Codex, Claude, and Bugbot) on real

production bugs.

Introduction

AI code review tools promise to catch bugs before production. But do they actually work?

We tested 8 AI code reviewers in total: Entelligence, CodeRabbit, Greptile, Copilot, Graphite, Claude, Codex, and Bugbot on 67 real production

bugs from open-source projects. Each bug shipped to production broke something and required a fix in a later commit.

The bugs included race conditions, security vulnerabilities, breaking API changes, and logic errors across five different repositories: Cal.com (TypeScript), Sentry (Python), Discourse (Ruby), Keycloak (Java), and Grafana (Go).

Results (F1 scores)

Full results table

| Rank | Reviewer | F1 Score | Found/Golden |

|---|---|---|---|

| 1 | Entelligence | 47.2% | 30/67 |

| 2 | Codex | 45.4% | 27/67 |

| 3 | Claude | 42.8% | 29/67 |

| 4 | Bugbot | 39.4% | 31/67 |

| 5 | Greptile | 36.9% | 31/67 |

| 6 | CodeRabbit | 33.0% | 32/65 |

| 7 | Copilot | 22.6% | 23/67 |

| 8 | Graphite | 13.4% | 5/67 |

See The Proof

Methodology

Building a Golden Dataset for Code Review Evaluation

Benchmarking AI code reviewers requires a ground truth: a set of golden comments representing what a thorough, context-aware review should look like. Catch rate alone - whether a tool flags a known bug—doesn't capture comment quality, relevance, or actionability. We set out to build a golden dataset that could measure these dimensions.

An Ensemble Approach to Ground Truth

Rather than relying on a single model or a single human's judgment, we built our golden dataset using an ensemble of frontier LLMs: Claude Opus, Gemini 2.5, and GPT-5. Each model received identical context for every pull request diff - including the inbound and outbound dependencies of the changed code. We extracted these dependencies using Language Server Protocol (LSP), which allowed us to programmatically trace function calls, imports, and type relationships across the codebase. This dependency context is critical; it's what separates superficial line-by-line feedback from reviews that understand how a change ripples through a codebase.

We prompted each model independently to generate review comments. This gave us three distinct perspectives on every diff, each shaped by the model's own reasoning patterns and training.

Consensus Through Voting

Three models means three (often different) sets of comments. To distill these into a single golden dataset, we implemented a majority voting process. A separate LLM call - GPT-4o - acted as the arbiter, evaluating which comments across the three sources addressed the same issues and selecting those with majority agreement. Comments that only one model flagged were discarded; comments that two or three models independently identified became candidates for the golden set.

This voting mechanism serves two purposes: it filters out model-specific hallucinations or stylistic quirks, and it surfaces issues that multiple sophisticated models agree are worth flagging. If Opus, Gemini, and GPT-5 all independently identify the same problem, that's a strong signal it belongs in the ground truth.

Human Validation

LLM consensus isn't infallible. To ensure quality, reviewers at our company manually examined the voting-selected comments. This human validation step caught edge cases where models agreed on something incorrect or where the voting process produced ambiguous results. The final golden dataset represents the intersection of multi-model consensus and human expert judgment.

Through this process, we generated 67 golden comments. We used the same repositories featured in Greptile's benchmark - Sentry, Cal.com, Grafana, Keycloak, and Discourse - so our results are directly comparable to existing industry benchmarks. The full dataset, including all golden comments, is available in our repository.

Evaluation Methodology

With our golden dataset established, we ran the benchmark. We cloned each repository and opened pull requests mirroring the original bug-introducing commits. We triggered each AI code review tool (Codex, Greptile, CodeRabbit, Entelligence, Graphite, Copilot, Claude Code, and Bugbot) on these PRs under default settings, excluding dependency bot PRs to focus on meaningful code changes.

For each tool, we collected every review comment and compared it against our golden comments using Claude Sonnet 4.5 as a semantic similarity judge. The LLM evaluated whether each AI-generated comment captured the same issue and intent as the corresponding golden comment.

Combined Evaluation Analysis

1. Executive Summary

Aggregate Metrics (All Repositories)

| Rank | Reviewer | F1 Score | Recall | Precision | Found/Golden |

|---|---|---|---|---|---|

| 1 | Entelligence | 47.2% | 44.8% | 50.0% | 30/67 |

| 2 | Codex | 45.4% | 40.3% | 51.9% | 27/67 |

| 3 | Claude | 42.8% | 43.3% | 42.3% | 29/67 |

| 4 | Bugbot | 39.4% | 46.3% | 34.4% | 31/67 |

| 5 | Greptile | 36.9% | 46.3% | 30.7% | 31/67 |

| 6 | CodeRabbit | 33.0% | 49.2% | 24.8% | 32/65 |

| 7 | Copilot | 22.6% | 34.3% | 16.8% | 23/67 |

| 8 | Graphite | 13.4% | 7.5% | 66.7% | 5/67 |

* Note: CodeRabbit was evaluated on 65 total PRs (14 out of 16 in Cal.com) due to PR/dataset availability during testing. All other tools were evaluated on the full 67 PRs.

2. Per-Repository Performance

entelligence Performance by Repository

| Rank | Repository | F1 | Recall | Precision | Found/Golden |

|---|---|---|---|---|---|

| 1 | Cal.com | 68.1% | 81.2% | 58.6% | 13/16 |

| 2 | Grafana | 50.0% | 33.3% | 100.0% | 3/9 |

| 3 | Sentry | 45.5% | 29.4% | 100.0% | 5/17 |

| 4 | Keycloak | 38.5% | 33.3% | 45.5% | 4/12 |

| 5 | Discourse | 30.3% | 38.5% | 25.0% | 5/13 |

Cal.com - Issue Detection Matrix

Summary Metrics

| Rank | Reviewer | F1 Score | Recall | Precision | Found/Golden |

|---|---|---|---|---|---|

| 1 | Entelligence | 68.1% | 81.2% | 58.6% | 13/16 |

| 2 | Codex | 58.8% | 56.2% | 61.5% | 9/16 |

| 3 | Claude | 52.2% | 50.0% | 54.5% | 8/16 |

| 4 | Bugbot | 50.0% | 68.8% | 39.3% | 11/16 |

| 5 | Greptile | 47.4% | 56.2% | 40.9% | 9/16 |

| 6 | CodeRabbit | 46.0% | 78.6% | 32.6% | 11/14 |

| 7 | Copilot | 37.0% | 62.5% | 26.3% | 10/16 |

| 8 | Graphite | 0.0% | 0.0% | 0.0% | 0/16 |

* Note: CodeRabbit was evaluated on 65 total PRs (14 out of 16 in Cal.com) due to PR/dataset availability during testing. All other tools were evaluated on the full 67 PRs.

Per-PR Issue Detection Matrix

* The ↗ symbol indicates the tool successfully scanned and commented on the PR. It does not indicate whether the tool successfully found the specific golden bug. Please refer to the summary tables for exact detection rates.

Cal.com Issues (16 total)

Tip: Click any ✓/✗ to view the PR evidence.

| PR | Bug Description | Severity | Entelligence | Claude | Codex | CodeRabbit | Greptile | Copilot | Graphite | Bugbot |

|---|---|---|---|---|---|---|---|---|---|---|

| 1 | Unscoped deleteMany condition will delete any WorkflowReminder with retryCount > 1 regardless of method or date, causing unintended data loss. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 2 | Immediate cancel path does not update the database; reminder remains in DB neither deleted nor marked cancelled, leaving a stale record. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 3 | Working hours check computes end time from slotStartTime instead of slotEndTime, allowing slots that extend beyond working hours. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 4 | Wrong timezone passed to availability check: organizerTimeZone is set to schedule.timeZone (likely attendee TZ), causing incorrect date override calculations. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 5 | Type mismatch: constructor expects InsightsBookingServiceOptions but accepts InsightsBookingServicePublicOptions which lacks validation | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 6 | parseRefreshTokenResponse returns an object with .data property, but code assigns it directly to key without accessing .data | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 7 | Invalid Zod schema: using computed property names in z.object causes the parser to never match real token responses, breaking refresh flows when credential syncing is enabled. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 8 | Inconsistent return type: returns a fetch Response when syncing is enabled and returns the provider-specific object otherwise. Callers expecting a consistent shape (e.g., expecting .data) will crash at runtime. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 9 | Authorization check requires user to be BOTH team admin AND team owner; should allow either. This will wrongly forbid legitimate admins/owners from adding guests. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 10 | handleAdd checks multiEmailValue.length === 0 but initial state is [''], so clicking Add without input triggers Zod validation error instead of early return. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 11 | Logical error in selectedCalendar assignment - will always be undefined when externalCalendarId is provided | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 12 | Possible undefined access on destinationCalendar: reading integration on undefined will crash when no destinationCalendar is set. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 13 | Wrong fallback when computing calendarId for deletion: if externalCalendarId is undefined, find() compares against undefined and returns undefined, causing events.delete to receive an undefined calendarId. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 14 | destinationCalendar may be null and later team calendars are pushed conditionally with optional chaining, resulting in lost team destination calendars for COLLECTIVE events when organizer/eventType has none. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 15 | Calling updateMany with an empty data object will throw a Prisma error at runtime, causing the operation to fail. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 16 | forEach with async callback doesn't wait for promises to complete, causing deleteEvent/deleteMeeting to potentially not execute before the function continues | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

Key Findings for Cal.com

- Strong critical issue detection (5/6 = 83%)

- Catches complex OAuth token handling issues

- Identifies async/await problems (forEach with async)

- Good at null/undefined access patterns

- Generates more false positives than codex (12 vs 5)

- Missed timezone-related issue (organizerTimeZone)

- Some false positives are valid issues not in golden set

Discourse – Issue Detection Matrix

Summary Metrics

| Rank | Reviewer | F1 Score | Recall | Precision | Found/Golden |

|---|---|---|---|---|---|

| 1 | Bugbot | 44.4% | 46.2% | 42.9% | 6/13 |

| 2 | Codex | 38.1% | 30.8% | 50.0% | 4/13 |

| 3 | Greptile | 35.7% | 46.2% | 29.2% | 6/13 |

| 4 | Entelligence | 30.3% | 38.5% | 25.0% | 5/13 |

| 5 | Claude | 26.4% | 30.8% | 23.1% | 4/13 |

| 6 | CodeRabbit | 25.9% | 53.8% | 17.0% | 7/13 |

| 7 | Graphite | 25.0% | 15.4% | 66.7% | 2/13 |

| 8 | Copilot | 11.0% | 15.4% | 8.6% | 2/13 |

Per-PR Issue Detection Matrix

Discourse Issues (13 total)

| PR | Bug Description | Severity | Entelligence | Claude | Codex | CodeRabbit | Greptile | Copilot | Graphite | Bugbot |

|---|---|---|---|---|---|---|---|---|---|---|

| 1 | add_members expects param :group_id but the route is /admin/groups/:id/members, so params[:group_id] will be missing and raise ParameterMissing | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 2 | totalPages calculation is off by one; using Math.floor(...)+1 overcounts when user_count is an exact multiple of limit | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 3 | Syntax error: 'end if' is invalid Ruby syntax | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 4 | Possible nil dereference; i.content can be nil for many feeds, so calling scrub on it will raise NoMethodError. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 5 | Null pointer exception when current_user is nil - accessing current_user.id without checking if user is logged in | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 6 | Nil dereference when TopicUser record does not exist; calling methods on nil will raise an exception for users without a TopicUser row. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 7 | Null pointer exception when host is not found | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 8 | Migration inserts raw host values (including http/https scheme and paths) into embeddable_hosts without normalization, causing host matching to fail after migration. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 9 | SQL injection vulnerability. h from site_settings is interpolated directly into raw SQL ('#{h}'). Use parameterized query or sanitize input to prevent arbitrary SQL execution. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 10 | NoMethodError before_validation in EmbeddableHost | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 11 | Inverted lightness values - using 30% for light theme and 70% for dark theme will make text darker in light theme and lighter in dark theme, opposite of intended behavior | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 12 | Custom fallback order omits parent locale fallback (e.g., 'pt-BR' -> 'pt'), which will produce missing translations where base-language strings exist. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 13 | convert_with returns nil on success, causing resize/downsize to be treated as failures and preventing thumbnail/optimized image creation. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

Key Findings for Discourse

- Catches SQL injection vulnerability (security-critical)

- Identifies null pointer exceptions

- Good at localization/i18n issues

- Missed Ruby syntax errors

- Lower recall than CodeRabbit and Greptile on this codebase (Ruby)

- Misses some nil dereference patterns

- CSS/theme-related issues completely missed

Grafana Repository (9 Golden Issues)

Grafana – Issue Detection Matrix

Summary Metrics

| Rank | Reviewer | F1 Score | Recall | Precision | Found/Golden |

|---|---|---|---|---|---|

| 1 | Codex | 64.5% | 66.7% | 62.5% | 6/9 |

| 2 | Claude | 56.3% | 55.6% | 57.1% | 5/9 |

| 3 | Entelligence | 50.0% | 33.3% | 100.0% | 3/9 |

| 4 | CodeRabbit | 44.4% | 33.3% | 66.7% | 3/9 |

| 5 | Graphite | 44.4% | 33.3% | 66.7% | 3/9 |

| 6 | Bugbot | 33.8% | 44.4% | 27.3% | 4/9 |

| 7 | Greptile | 31.6% | 66.7% | 20.7% | 6/9 |

| 8 | Copilot | 23.5% | 33.3% | 18.2% | 3/9 |

Per-PR Issue Detection Matrix

Grafana Issues (9 total)

| PR | Bug Description | Severity | Entelligence | Claude | Codex | CodeRabbit | Greptile | Copilot | Graphite | Bugbot |

|---|---|---|---|---|---|---|---|---|---|---|

| 1 | recordStorageDuration called with 'name' instead of 'options.Kind' as third parameter | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 2 | Race condition: Multiple concurrent requests could pass the device count check simultaneously and create devices beyond the limit. Consider using a database transaction or lock. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 3 | Race condition: entryPointAssetsCache can be read without lock after RUnlock | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 4 | Init() is called during NewResourceServer() constructor, but Init() uses s.search which may be nil if opts.Search.Resources is nil | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 5 | Index creation is no longer synchronized, allowing concurrent BuildIndex calls for the same key to run bleve.New on the same directory, which can fail and break initialization. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 6 | Logic error: function always returns false regardless of feature flag state | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 7 | RunCommands is a stub that always returns an error, causing TablesList() to fail at runtime. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 8 | The enableSqlExpressions function has flawed logic that always returns false, effectively disabling SQL expressions unconditionally: | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 9 | QueryFramesInto is a stub that always returns an error, causing SQLCommand.Execute to fail. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

Key Findings for Grafana

- Perfect precision (100%) - no false positives

- Catches initialization nil issues

- Good at identifying parameter mismatches

- Low recall (33.3%) on Go codebase

- Misses race condition issues (0/3)

- Misses stub function issues

Keycloak Repository (12 Golden Issues)

Keycloak – Issue Detection Matrix

Summary Metrics

| Rank | Reviewer | F1 Score | Recall | Precision | Found/Golden |

|---|---|---|---|---|---|

| 1 | Greptile | 52.6% | 41.7% | 71.4% | 5/12 |

| 2 | Claude | 45.5% | 41.7% | 50.0% | 5/12 |

| 3 | Entelligence | 38.5% | 33.3% | 45.5% | 4/12 |

| 4 | Bugbot | 24.0% | 25.0% | 23.1% | 3/12 |

| 5 | CodeRabbit | 22.2% | 41.7% | 15.2% | 5/12 |

| 6 | Copilot | 18.7% | 25.0% | 15.0% | 3/12 |

| 7 | Codex | 18.2% | 16.7% | 20.0% | 2/12 |

| 8 | Graphite | 0.0% | 0.0% | 0.0% | 0/12 |

Per-PR Issue Detection Matrix

Keycloak Issues (12 total)

| PR | Bug Description | Severity | Entelligence | Claude | Codex | CodeRabbit | Greptile | Copilot | Graphite | Bugbot |

|---|---|---|---|---|---|---|---|---|---|---|

| 1 | Null pointer exception when webauthnAuth is null and user is not null | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 2 | Undefined method isConditionalPasskeysEnabled() used in condition; UsernameForm does not declare this method nor inherit it, causing a compilation failure. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 3 | The anchor tag validation logic is order-dependent and will fail on valid localizations where sentence fragments are reordered. The implementation iterates through anchor tags in both the original and translated strings simultaneously, requiring a strict sequential match. This is an incorrect assumption for translations and will cause incorrect build failures. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 4 | The regex used to detect HTML tags is case-sensitive and only matches lowercase tags. If an English message contains uppercase HTML tags (e.g., '<B>'), HTML will not be detected, causing a strict no-HTML policy to be applied to its translations. This will incorrectly reject valid translations that use lowercase HTML, causing a build failure. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 5 | Incorrect ID is used to identify groups, which will break user searches based on group permissions. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 6 | The final permission filter on the user search stream was removed, causing users with direct view permissions (but not via a group) to be omitted from search results. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 7 | getClientsWithPermission may include the resource-type resource ("Clients") in the returned set, not just individual client identifiers, producing wrong results when a global permission exists. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 8 | getResourceTypeResource() can return null, causing NPE at line 219 in findByResource(server, resource) | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 9 | Two unsafe .get() calls throw NoSuchElementException if credentialModelOpt is empty or getNextRecoveryAuthnCode() returns empty (when all codes used) | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 10 | getMyUser(user) returns null if user not in map (see line 310 check), causing NPE at myUser.recoveryCodes/myUser.otp | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 11 | readNext() can cause infinite loop when length calculation is incorrect | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 12 | Duplicate null check on 'grantType' instead of checking 'rawTokenId' | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

Key Findings for Keycloak

- Catches HTML sanitizer issues (validation order, case sensitivity)

- Identifies authorization ID mismatches

- Reasonable precision (36.4%)

- Low critical issue detection (20%)

- Missed passkey authentication bugs

- Missed duplicate null check bug

Sentry Repository (17 Golden Issues)

Summary Metrics

| Rank | Reviewer | F1 Score | Recall | Precision | Found/Golden |

|---|---|---|---|---|---|

| 1 | Codex | 46.2% | 35.3% | 66.7% | 6/17 |

| 2 | Entelligence | 45.5% | 29.4% | 100.0% | 5/17 |

| 3 | CodeRabbit | 37.5% | 35.3% | 40.0% | 6/17 |

| 4 | Bugbot | 35.0% | 41.2% | 30.4% | 7/17 |

| 5 | Claude | 34.3% | 41.2% | 29.4% | 7/17 |

| 6 | Greptile | 24.5% | 29.4% | 21.1% | 5/17 |

| 7 | Copilot | 19.7% | 29.4% | 14.8% | 5/17 |

| 8 | Graphite | 0.0% | 0.0% | 0.0% | 0/17 |

Sentry Issues (17 total)

| PR | Issue | Severity | Entelligence | Claude | Codex | CodeRabbit | Greptile | Copilot | Graphite | Bugbot |

|---|---|---|---|---|---|---|---|---|---|---|

| 1 | A message is marked as complete even if processing fails, leading to data loss as the offset for the failed message will be committed. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 2 | The shutdown logic is incorrect as `queue.Queue` has no `shutdown` method. This causes an `AttributeError`, preventing graceful shutdown and leading to the loss of all in-flight messages in the queues. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 3 | Accessing nested dictionary keys without null checks will throw AttributeError when contexts or error_sampling are None or missing | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 4 | The code attempts to use a negative offset for queryset slicing when `cursor.is_prev` is true. Django ORM does not support negative indexing and will raise a `ValueError`, causing a 500 server error. This is a regression in the existing `DateTimePaginator`. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 5 | OptimizedCursorPaginator.get_item_key uses floor/ceil on a datetime key (order_by='-datetime'), causing TypeError. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 6 | The code attempts to use a negative offset for queryset slicing. Django ORM does not support negative indexing and will raise a `ValueError: Negative indexing is not supported.`. The comment indicating this is safe is incorrect. This will cause API requests using this new pagination feature to fail with a 500 error. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 7 | Direct dictionary access on `val["end_timestamp_precise"]` will raise a `KeyError` if the field is missing from the Kafka message payload. This will cause the consumer to repeatedly crash and halt processing for the entire partition, leading to data loss and an outage for span ingestion. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 8 | Changing from `sscan` to `zscan` on the same Redis key pattern will cause failures during deployment. Old keys of type `set` will cause `zscan` to fail with a `WRONGTYPE` error, which will crash the `flush_segments` task and lead to data loss as buffered spans will not be processed before their TTL expires. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 9 | Calling kill() on multiprocessing.Process without checking if process is alive first | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 10 | On shutdown, flusher processes may not be terminated due to the same incorrect isinstance check, causing orphaned processes and resource leaks. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 11 | The class `MetricAlertDetectorHandler` inherits from the abstract class `StatefulDetectorHandler` but fails to implement its abstract methods: `get_dedupe_value`, `get_group_key_values`, and `build_occurrence_and_event_data`. Any attempt to instantiate this handler will raise a `TypeError`, causing a crash when processing metric alert detectors. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 12 | The code attempts to convert a `DetectorPriorityLevel` enum to a `PriorityLevel` enum by casting its integer value. The integer values of these two enums are not compatible (e.g., `DetectorPriorityLevel.HIGH` is 30, but 30 is not a valid value for `PriorityLevel`), which will raise a `ValueError` and crash the process whenever a new issue needs to be created. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 13 | The method `_truncate_title` is called on the wrong class, `PRCommentWorkflow`, which does not have this method. It is defined on `CommitContextIntegration`. This will raise an `AttributeError` and cause the PR commenting task to fail. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 14 | The new `TableWidgetVisualization` component is rendered with hardcoded empty data. The actual data from `tableResults` is ignored, which will cause all table widgets to be empty for users with the `use-table-widget-visualization` feature flag enabled. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 15 | The code assumes that the order of values from `nodestore.backend.get_multi(node_ids).values()` will match the order of `error_ids`. This is not guaranteed by all nodestore backend implementations (e.g., DjangoNodeStorage), leading to a mismatch between error IDs and their corresponding data. | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 16 | timezone.now() is called at class definition time, not instance creation time | High | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

| 17 | Passing a datetime in Celery task kwargs will fail JSON serialization, causing task enqueue to error. | Critical | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ | ↗ |

Key Findings for Sentry

- Perfect precision (100%) - zero false positives

- Catches Django ORM issues (negative slicing)

- Identifies Celery serialization problems

- Good at class definition time issues

- Lower recall than codex on this Python codebase

- Missed abstract method implementation issues

- Missed multiprocessing bugs

- Missed Redis command compatibility issues

How Other Tools Benchmark Code Review

Different AI code review tools use different benchmarking methodologies, which makes direct comparisons challenging. Here's how competitors approach evaluation:

Greptile's Approach

Greptile released their benchmark report using the same 5 repositories we tested (Cal.com, Sentry, Discourse, Keycloak, and Grafana). However, their methodology differs significantly:

- Dataset size: Only one issue per PR in their evaluation (50 issues vs. our 67 bugs across 50 PRs)

- Metrics: Reports only recall

- Dataset availability: To our knowledge, Greptile did not release their ground truth dataset publicly

Key Takeaways

The test covered bugs that matter: race conditions, breaking changes, security vulnerabilities, and logic errors that crash production systems.

The gap came from understanding code relationships. When a function signature changes, when a cache eviction policy creates race conditions, or when authorization checks get skipped, these bugs require seeing beyond individual lines of code.

Performance across five languages stayed consistent. That consistency matters when teams work in polyglot codebases.

3. Conclusion

entelligence achieves the best F1 score (47.2%) among all evaluated tools by balancing recall and precision

effectively.

It excels at TypeScript/JavaScript codebases (68.1% F1 on Cal.com), Schema validation errors, Async/await patterns,

Authorization logic bugs, and SQL injection vulnerabilities.

However, it struggles with some concurrency and race patterns under load, Ruby-specific patterns, Abstract method implementations, and

CSS/UI regressions.

The combination of entelligence with a tool that has higher recall on specific patterns (like Greptile for

Java/Keycloak) could provide comprehensive coverage.

We raised $5M to run your Engineering team on Autopilot

We raised $5M to run your Engineering team on Autopilot

Watch our launch video

Talk to Sales

The same class of bug

won't ship twice.

Ellie catches what AI generates wrong, learns from every incident, and gives your leaders a clear picture of what AI spend is actually returning.

Talk to Sales

Turn engineering signals into leadership decisions

Connect with our team to see how Entelliegnce helps engineering leaders with full visibility into sprint performance, Team insights & Product Delivery

Try Entelligence now